In my previous post, I reviewed the current performance of available microscope control applications like Metamorph, NIS Elements, and Micro-Manager. Metamorph’s device streaming function was the hands-down winner if you are looking for a simple commercial solution. On the other hand, many researchers use the Micro-Manager software package, which is free, and quite fast considering it’s free. But what if you want even greater speed while using Micro-Manager? This is where stand-off controllers, like my device, the Triggerscope, or others, like National Instruments cards or the Esio controller, may be considered.

Each option has its own advantages and disadvantages, but you really won’t go wrong with any of them. Deciding to work with triggering is a bit of an educational hurdle, but it will allow you to become a far more powerful imaging jedi, as knowing how to make stuff work quickly can greatly expand the possibilities in your imaging! So, even if you are considering one of the competing products instead of the Triggerscope, I say that no matter which way you choose, a stand-off controller is superior in its inherent capability compared to PC-based control. Why?

- Reliable: PC-based control requires drivers, and drivers require a lot of development work. They live inside the operating system’s environment, which is always changing (available RAM, processor time, etc.) and where they compete with other applications for resources. A stand-alone controller has a relatively static set of code in firmware, which basically either runs or won’t run at all. It’s a LOT more stable and reliable, not to mention that a Windows OS update won’t blow it up!

- Inherently Open: With voltages and TTL signals, you are free as the owner of such a device to basically drive anything you want. Filter wheels, lasers, piezo focusing modules, galvo mirrors, stepper motors, really anything you can imagine. This is not the case with software-specific device drivers.

- Fast: While applications like Metamorph are quite fast, even Metamorph sits on top of the operating system, and is therefore limited by the resources and access the OS will provide. Microcontroller-based solutions run their firmware directly on the hardware, which is as low as you can get without using an FPGA. So these devices operate about as quickly as it’s practically possible to operate.

Here’s a video I recorded recently demonstrating the speed potential for a stand-off controller.

Control Modes

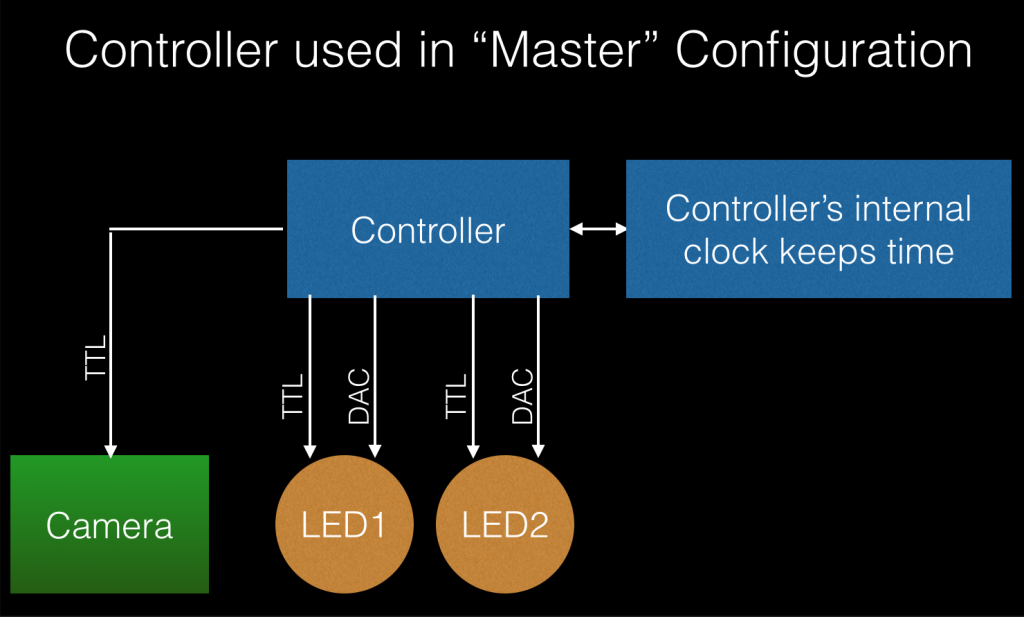

Generally speaking, there are two ways one can use a stand-alone controller with an existing microscope. For this example, let’s assume we are using a TTL-controlled LED, and that we also have analog control over the intensity of the LED.

Master Control – In a master control mode, the controller is used as the core clock for the other devices in the system, so the master directs the other devices to do things. In this example, the Triggerscope would run a program in which any latency or delays are pre-programmed by the user. The user, or software on the computer, would tell the controller to “ARM”. The operation sequence would look as follows:

- Software sends “ARM” to controller

- Controller runs program 1, which is stored in memory

- DACs and TTLs are updated using the values saved in program 1

- If a delay is added to program 1, a wait for the delay duration is executed

- With program 1 complete, the controller runs program 2, repeating the update and delay steps above.

- These steps are repeated for each stored program until the highest-numbered program has run.

- Controller reports that it’s done working to the host computer
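The master-mode sequence above can be sketched as a small simulation. This is only an illustration in Python, not the Triggerscope firmware or its command protocol: `ProgramLine`, `SimulatedController`, and the `"DONE"` reply are hypothetical names, and the delay is modelled by advancing a software clock rather than by actually waiting.

```python
from dataclasses import dataclass


@dataclass
class ProgramLine:
    """One stored program: output values plus an optional delay (hypothetical layout)."""
    dac_volts: tuple        # one target voltage per DAC channel
    ttl_states: tuple       # one bool per TTL line, True = high
    delay_ms: float = 0.0   # wait after the outputs are updated


class SimulatedController:
    """Stand-in for the hardware: logs output updates instead of driving pins."""

    def __init__(self, programs):
        self.programs = list(programs)
        self.clock_ms = 0.0   # simulated time since ARM
        self.log = []         # (time_ms, program_number, dac_volts, ttl_states)

    def arm(self):
        """Run every stored program in order, then report completion."""
        for number, line in enumerate(self.programs, start=1):
            # Update the DACs and TTLs from the values saved in this program.
            self.log.append((self.clock_ms, number, line.dac_volts, line.ttl_states))
            # If a delay was programmed, wait it out (here: advance the clock).
            self.clock_ms += line.delay_ms
        return "DONE"   # assumed completion message sent back to the host


# Four program lines with 10 ms delays, toggling one TTL and stepping two DACs.
programs = [
    ProgramLine((0.0, 5.0), (True,), delay_ms=10.0),
    ProgramLine((2.5, 2.5), (False,), delay_ms=10.0),
    ProgramLine((5.0, 0.0), (True,), delay_ms=10.0),
    ProgramLine((0.0, 0.0), (False,), delay_ms=10.0),
]
controller = SimulatedController(programs)
print(controller.arm(), "after", controller.clock_ms, "ms")
```

Because the timing lives entirely in the stored programs, the host is only involved at the start (the ARM command) and at the end (the completion report).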

Here’s a block diagram showing this configuration

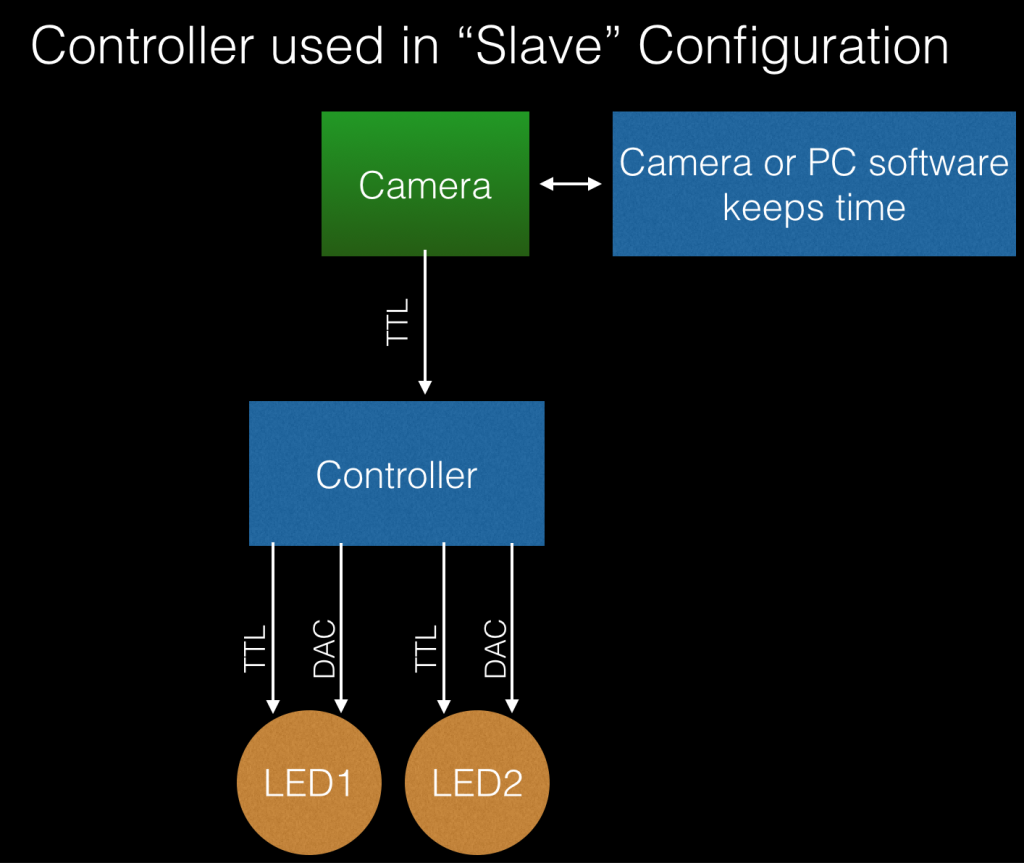

Slave Control – In this configuration, the controller has a list of programs to run, but waits for the camera’s trigger input before running each one. This can be useful when the camera has long gaps between frames due to exposure, or when the microscopy software controls the camera intermittently based on other inputs (for instance, the software may be driving an XY stage and will expose the camera only after stage movement is complete). Operation for such a setup would look like this:

- Controller is sent an ARM command from software

- Controller waits to run program 1

- Camera sends a TTL pulse or a high level to the controller

- Controller runs program 1

- Controller updates DAC and TTL lines as specified in current program line, stored in memory.

- If a wait value is specified, controller waits for the specified duration

- When the program is complete, the controller goes back to waiting for the next trigger in, which starts the next program.

- The wait, trigger, and run steps repeat until the last program specified has been run.
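The triggered sequence above can be sketched the same way. Again this is a hedged Python illustration rather than real firmware: `run_triggered` and `next_trigger` are hypothetical names, camera triggers are represented as a list of timestamps, and the choice to ignore a trigger that arrives while a program is still in its wait period is my assumption for the sketch.

```python
def next_trigger(triggers, not_before_ms):
    """Return the first trigger time at or after not_before_ms, or None if none is left."""
    for t in triggers:
        if t >= not_before_ms:
            return t
    return None


def run_triggered(programs, trigger_times_ms):
    """Run one stored program per camera trigger.

    programs: list of (outputs, wait_ms) pairs held in controller memory.
    trigger_times_ms: ascending times at which the camera raises its TTL line.
    Returns a list of (start_time_ms, program_number, outputs).
    """
    triggers = iter(trigger_times_ms)
    executed = []
    ready_at_ms = 0.0   # earliest time the controller can accept a trigger
    for number, (outputs, wait_ms) in enumerate(programs, start=1):
        # Controller waits here until the camera triggers it.
        start = next_trigger(triggers, ready_at_ms)
        if start is None:
            break   # camera stopped triggering; remaining programs never run
        # Update DAC and TTL lines for this program, then honour its wait value.
        executed.append((start, number, outputs))
        ready_at_ms = start + wait_ms
    return executed


programs = [
    ({"dac1": 1.0, "ttl1": True}, 10.0),
    ({"dac1": 2.0, "ttl1": False}, 10.0),
    ({"dac1": 3.0, "ttl1": True}, 10.0),
]
# The trigger at 3 ms arrives during program 1's wait and is skipped in this sketch.
for start, number, outputs in run_triggered(programs, [0.0, 3.0, 50.0, 100.0]):
    print(f"t={start} ms: program {number} -> {outputs}")
```

Here the pacing comes from the camera, so irregular gaps between triggers simply stretch the time between program steps.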

Here’s a block diagram of this configuration:

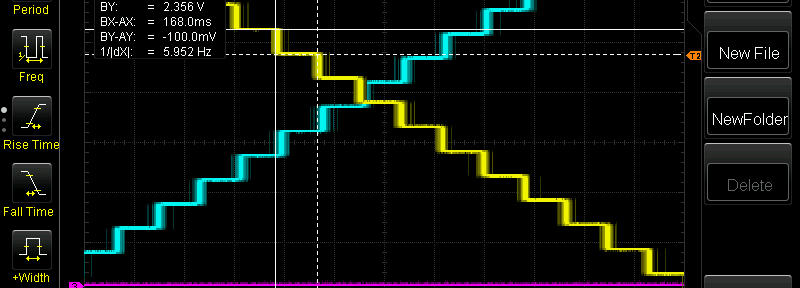

So how fast can a system like this run? Here’s a screen capture from a recent oscilloscope run, using my generation 2 Triggerscope PCB. In this example, there’s about 1.4 ms of total system latency, meaning that a maximum speed of 1000/1.4 ≈ 714 fps is theoretically possible. In the screen capture below, the yellow line is a DAC line, the blue line is a second DAC line, and the magenta line is a TTL line.

Let’s investigate this a bit further. In the above sequence, the Triggerscope was configured for master mode, with 10 ms delays per line. The two DACs were updated and the TTL was controlled over a total of 4 program lines. If we look a bit closer at this graph, we can measure the width of these signal changes:

For each step in the program list, we can measure ~11.3 or 11.4 ms of total delay. Why is this extra ~1.4 ms of delay present? It turns out that for the particular IC used as the DAC, the communication method and the response time add up to ~1.4 ms. This can be seen by comparing the DAC line change with the faster, direct TTL line change:

For the above example, we can see a measurement of 500 µs. This is the delay of the TTL line beyond our specified 10 ms wait in the program. What this shows is that the controller itself can operate at ~2000 fps, but this particular DAC requires roughly an additional 1 ms to operate.
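To make the arithmetic behind these numbers explicit, here is a short Python sketch. It assumes the 11.4 ms step reading (the upper of the two values measured above) and uses a hypothetical helper name, `timing_summary`; it only restates the scope measurements and is not a model of the hardware.

```python
def timing_summary(programmed_delay_ms, dac_step_ms, ttl_overhead_ms):
    """Derive overheads and theoretical maximum rates from scope measurements."""
    # Overhead on a DAC line = measured step width minus the programmed wait.
    dac_overhead_ms = dac_step_ms - programmed_delay_ms
    return {
        "dac_overhead_ms": dac_overhead_ms,
        # Portion of the overhead attributable to the DAC beyond the TTL path.
        "dac_extra_ms": dac_overhead_ms - ttl_overhead_ms,
        # Maximum rate if each step costs only its overhead (no programmed delay).
        "max_fps_with_dac": 1000.0 / dac_overhead_ms,
        "max_fps_ttl_only": 1000.0 / ttl_overhead_ms,
    }


summary = timing_summary(programmed_delay_ms=10.0, dac_step_ms=11.4, ttl_overhead_ms=0.5)
print(f"DAC overhead:      {summary['dac_overhead_ms']:.1f} ms")
print(f"Extra due to DAC:  {summary['dac_extra_ms']:.1f} ms")
print(f"Max rate with DAC: {summary['max_fps_with_dac']:.0f} fps")
print(f"Max rate TTL only: {summary['max_fps_ttl_only']:.0f} fps")
```

The roughly 0.9 ms difference between the two overheads is the "additional 1 ms" attributed to the DAC.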

Conclusions

Stand-off control systems like the Triggerscope require a bit of homework to set up, but I hope the examples above prove the benefit of such systems. Very high speeds can be achieved, far faster than most cameras can capture light! Additionally, the open nature of these systems allows end users to set them up for a wide variety of uses, from light to fluid delivery. Best of all, these controllers are essentially “crash-proof”, eliminating the random crashes commonly experienced with typical microscope control software.

Comments

2 responses to “High Speed Microscope Automation using stand-off controllers”

A pet peeve: the SI abbreviation for second is a lower case s, not a capital S (the capital S is the siemens, a unit of conductance).

fixed – thanks for catching that Kurt!